Claude agents learned to dream and self-improve

This week the AI coding story is not “one more chatbot inside an editor.”

It is something much bigger: agents are getting their own memory, their own workspaces, their own tools, and their own security problems.

That is the shift.

🔥 The Big One

Claude’s new dreaming feature is how agents start improving between runs.

This is the story that deserves the cover.

Anthropic just introduced dreaming for Claude Managed Agents — a background process where an agent reviews previous sessions, identifies patterns, cleans up memory, removes outdated context, and improves what it carries into future work.

That sounds weird until you think about the biggest pain with AI agents today: they are powerful in the moment, but they forget too much. You explain the repo, the conventions, the edge cases, the mistakes from last time… and then the next session starts from scratch.

Dreaming changes that. It points toward agents that do not just execute tasks, but learn from previous runs. Not model training. Not magic. A managed memory layer that can be reviewed, curated, and reused.

That is a huge unlock for coding. Imagine a dev agent that remembers the architectural decisions it got wrong last sprint, the test patterns your team prefers, the files it should never touch, and the repeated review feedback it keeps receiving.

This is where agents stop feeling like disposable prompts and start feeling like long-term teammates.

“Dreaming creates something closer to long-term memory.” — Forbes
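To make “reviews previous sessions, cleans up memory, removes outdated context” a bit more concrete, here is a toy Python sketch of that kind of curation pass. It is not how Anthropic implements dreaming. It simply assumes memory is a list of timestamped text notes, and the `MemoryEntry` type, the `consolidate` function, the 30-day staleness cutoff, and the "repeated across sessions becomes a rule" heuristic are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class MemoryEntry:
    """Hypothetical memory record: one note an agent saved during a session."""
    text: str
    last_seen: datetime


def consolidate(entries, now, max_age=timedelta(days=30), min_repeats=2):
    """Toy 'dreaming' pass (illustrative only, not Anthropic's method).

    1. Drop notes older than max_age (outdated context).
    2. Merge duplicates that differ only in case or surrounding whitespace.
    3. Promote notes that recur at least min_repeats times into standing rules.
    """
    fresh = [e for e in entries if now - e.last_seen <= max_age]
    counts = Counter(e.text.strip().lower() for e in fresh)

    seen, notes = set(), []
    for e in fresh:
        key = e.text.strip().lower()
        if key not in seen:  # keep the first spelling of each distinct note
            seen.add(key)
            notes.append(e.text.strip())

    rules = [n for n in notes if counts[n.lower()] >= min_repeats]
    return {"rules": rules, "notes": [n for n in notes if n not in rules]}


now = datetime(2026, 5, 1)
sessions = [
    MemoryEntry("Use pytest fixtures, not setUp", now - timedelta(days=2)),
    MemoryEntry("use pytest fixtures, not setUp ", now - timedelta(days=9)),
    MemoryEntry("Never edit files under generated/", now - timedelta(days=5)),
    MemoryEntry("API base URL is /v1", now - timedelta(days=90)),
]
memory = consolidate(sessions, now)
print(memory["rules"])  # ['Use pytest fixtures, not setUp']
print(memory["notes"])  # ['Never edit files under generated/']
```

A real system would presumably use a model to judge relevance instead of exact string matching. The point of the sketch is that the output is a plain, reviewable structure, which is what makes a managed memory layer something a team can inspect and correct.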

⚡ What shipped this week

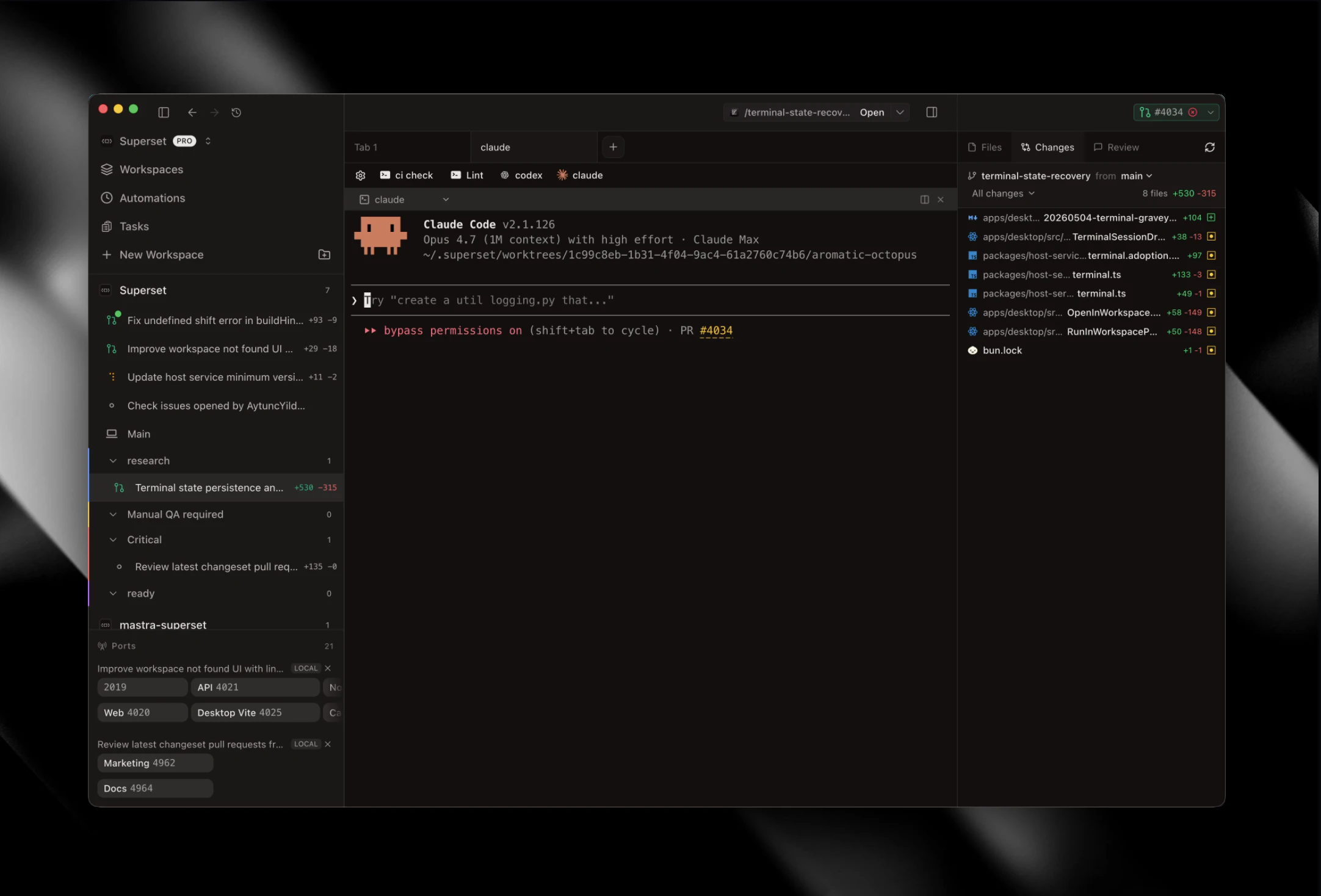

1. Superset 2.0 turns coding agents into parallel teammates.

Superset 2.0 launched on Product Hunt this week with a simple but powerful promise: run hundreds of coding agents on any machine, from anywhere.

Not one agent in one chat window. Superset is pushing toward a world where you can spin up multiple CLI agents, offload them to different machines, share remote workspaces with teammates, and manage parallel coding work like an operating system for software teams.

Product Hunt agreed. Superset 2.0 pulled 460 votes and 47 comments this week. The signal is clear: builders do not just want smarter agents — they want a better way to run them.

“Run 100s parallel coding agents, offload them to different machines.” — Product Hunt

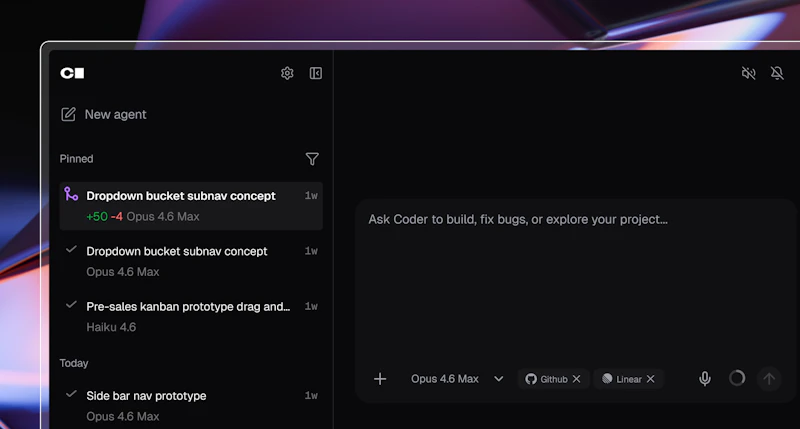

2. Coder launched self-hosted, model-agnostic coding agents.

Coder Agents launched in beta with a very enterprise-friendly angle: run the agent control plane, orchestration, and execution inside your own infrastructure.

This is where serious companies are heading. They want the productivity of agents, but they also want control over repos, networks, logs, and governance. The future of AI coding is not just model choice — it is execution control.

“Coder Agents removes that tradeoff.” — Coder

3. GitHub Copilot in VS Code is becoming an agent runtime.

GitHub shipped a stack of Copilot upgrades in VS Code: semantic workspace search, repo/org search, terminal access, browser awareness, inline diffs, remote CLI steering, and bring-your-own-key support for teams.

That sounds like a changelog, but the bigger point is this: Copilot is moving from “assistant in the editor” to an agent runtime inside the development environment. Once an agent can read terminals, inspect browser state, search your codebase semantically, and continue work remotely, the IDE becomes the command center.

“Agents can read from and write to existing foreground terminals.” — GitHub Changelog

4. A Cline Kanban flaw exposed the scary side of local AI tools.

This is the story every AI builder should pay attention to.

A critical Cline Kanban vulnerability reportedly allowed malicious websites to connect to unauthenticated localhost WebSocket endpoints. The result: attackers could potentially access workspace data, inject terminal commands, or kill active agent sessions.

That is brutal — but also useful. It reminds us that localhost is not a magic security boundary. If your AI tool opens a local server, browser pages may be closer to your dev environment than you think.

“Localhost is not a magic security boundary.” — Infosecurity Magazine
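This is not Cline's code or its actual fix, but the general defense is easy to sketch. Below is a minimal Python check a local agent tool could run before accepting a WebSocket connection: require a known browser Origin and a per-launch secret token. The port 3484, `ALLOWED_ORIGINS`, `SESSION_TOKEN`, and `allow_handshake` are hypothetical, and the token is assumed to arrive as a query parameter, since the browser WebSocket API does not let pages set arbitrary headers.

```python
import hmac
import secrets
from typing import Optional

# Hypothetical origins for the tool's own UI; a real tool would use its own port.
ALLOWED_ORIGINS = {"http://localhost:3484", "http://127.0.0.1:3484"}

# Random secret generated at each launch and handed only to the tool's own UI,
# e.g. embedded in the page it serves, then sent back as a query parameter.
SESSION_TOKEN = secrets.token_urlsafe(32)


def allow_handshake(origin: Optional[str], token: Optional[str],
                    expected: str = SESSION_TOKEN) -> bool:
    """Accept a localhost WebSocket upgrade only from a known origin with the right token."""
    # Browsers attach an Origin header to WebSocket handshakes, so a page on
    # another site arrives with that site's origin, not the tool's.
    if origin not in ALLOWED_ORIGINS:
        return False
    # Origin alone is not enough: non-browser clients can send any value they like.
    if not token:
        return False
    # Constant-time comparison avoids leaking the token through timing.
    return hmac.compare_digest(token.encode(), expected.encode())


print(allow_handshake("https://evil.example", SESSION_TOKEN))   # False: wrong origin
print(allow_handshake("http://localhost:3484", "guess"))        # False: wrong token
print(allow_handshake("http://localhost:3484", SESSION_TOKEN))  # True
```

The Origin check stops drive-by web pages; the token also covers local clients that can forge headers. How you hook a check like this into a real WebSocket server depends on the library you use.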

5. Airbnb says AI wrote 60% of its new code in Q1.

Airbnb CEO Brian Chesky said AI wrote 60% of new code produced by engineers in Q1 2026.

Take the number with the right framing — this is a company claim, not an independent code-quality audit. But still, it is a huge signal. Big companies are not just experimenting with AI coding anymore. They are reorganizing engineering output around it.

“Where you might have needed a team of 20 engineers before, an engineer can now spin up agents.” — TechCrunch

🧰 Worth your time

- Monid 2.0: “OpenRouter for agent tools.” Agents need tool access, discovery, and controlled usage just as much as they need better models.

- Graphbit PRFlow: AI code review focused on catching meaningful issues instead of spamming PRs with noise. This is where AI review becomes useful.

- Kanwas: an open-source brain for teams and agents. The context layer is becoming as important as the model layer.

- Vercel Firewall rules with natural language: describe the traffic rule you want, and Vercel generates it. Security tooling is becoming more agent-friendly too.

My weekly message to YOU!

The pattern this week is clear: AI coding is moving from prompts to systems.

One agent is useful. Ten agents are chaos unless you have the workflow around them: memory, context, machines, logs, permissions, review, and a clear place for the output to land.

So here is the challenge: do not just test another AI coding tool this week — design one repeatable AI workflow.

Pick one thing you do every week. Research, PR review, bug fixing, test writing, content generation, whatever. Then ask: what would this look like if an agent owned the first draft and you owned the final decision?

Reply and tell me: what is the first workflow you would trust an agent with?

I read every single one.

Talk soon PAPAFAM,

Sonny 👋🏼

👇🏽 Don't forget to follow me across socials!
