GitHub just changed how teams use AI agents

I used to think the AI coding race was mainly about which model wrote the best function.

This week made it obvious: the real race is who can turn agent work into something teams can trust, review, and measure.

🔥 The Big One

GitHub Copilot is becoming an async teammate — and now teams can measure the work.

GitHub's Copilot cloud agent is moving beyond simple pull-request workflows. That is the important shift. It can now research, plan, work on branches, and delay PR creation until the work is ready for review.

This matters because GitHub owns the place where code already lives. If Copilot becomes an async teammate inside your repo, the value is not just faster coding. It is work happening in the background while you are not sitting there prompting it.

The bigger story is measurement. GitHub also added cloud-agent fields to the Copilot usage metrics API, including whether a developer used the Copilot cloud agent. That means agentic coding is moving from “cool demo” into something engineering leaders can track across teams.

And that matches what we saw across Product Hunt this month: Waydev measuring the full AI SDLC, Bench for Claude Code recording agent sessions, Claude Usage Tracker showing real usage across tools, and Cursor 3 turning the IDE into a workspace for managing multiple agents. Everyone is converging on the same question: when AI agents write code, how do we know what actually happened and what actually shipped?

"Copilot cloud agent… is no longer limited to pull-request workflows." — GitHub

🛠️ What I built this week

This week's pattern was simple: stop treating AI coding like chat, and start treating it like a system.

- Agent review loop: I mapped a workflow around Bench for Claude Code so every serious agent run has a replayable audit trail, not just a final diff. If you cannot see how the agent got there, you cannot properly trust what it shipped.

- AI SDLC dashboard idea: Waydev and Claude Usage Tracker both point at the same gap. Teams are using AI everywhere, but most cannot connect tool usage to shipped output. Adoption is easy. Measurement is the hard part.

- Context-tax cleanup: Google Workspace CLI and Edgee both attack wasted tokens from different sides. One avoids bloated tool definitions, the other compresses prompt noise. The best agent stack is not always the biggest one. It is the cleanest one.
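To make the "replayable audit trail" idea from the list above concrete, here is a minimal Python sketch. It is not how Bench for Claude Code works and it does not use any real tool's API: the `AuditTrail` class, the `summarize` helper, the event fields (`ts`, `kind`, `detail`), and the JSONL file layout are all hypothetical names chosen for illustration. The only point is that each step of an agent run gets written down as it happens, so you can read the run back in order afterwards.

```python
import json
import time
from pathlib import Path


class AuditTrail:
    """Append-only JSONL log of the steps in one agent run (hypothetical format)."""

    def __init__(self, path):
        self.path = Path(path)

    def record(self, kind, detail):
        # One JSON object per line, so a crashed run still leaves a readable log.
        event = {"ts": time.time(), "kind": kind, "detail": detail}
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(event) + "\n")

    def replay(self):
        # Yield events in the order they were written.
        if not self.path.exists():
            return
        with self.path.open(encoding="utf-8") as f:
            for line in f:
                if line.strip():
                    yield json.loads(line)


def summarize(trail):
    """Count events by kind, e.g. tool calls versus file edits in a run."""
    counts = {}
    for event in trail.replay():
        counts[event["kind"]] = counts.get(event["kind"], 0) + 1
    return counts
```

In use, you would call something like `trail.record("tool_call", {"cmd": "pytest"})` wherever your own harness runs a command or edits a file, then loop over `trail.replay()` during review. An append-only text file is a deliberately boring choice: it needs no database, and it can be diffed and attached to a pull request.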

"If your AI workflow only works when you personally babysit it, you have not built a system yet. You have built a very clever distraction." — Sonny

⚡ What shipped this week

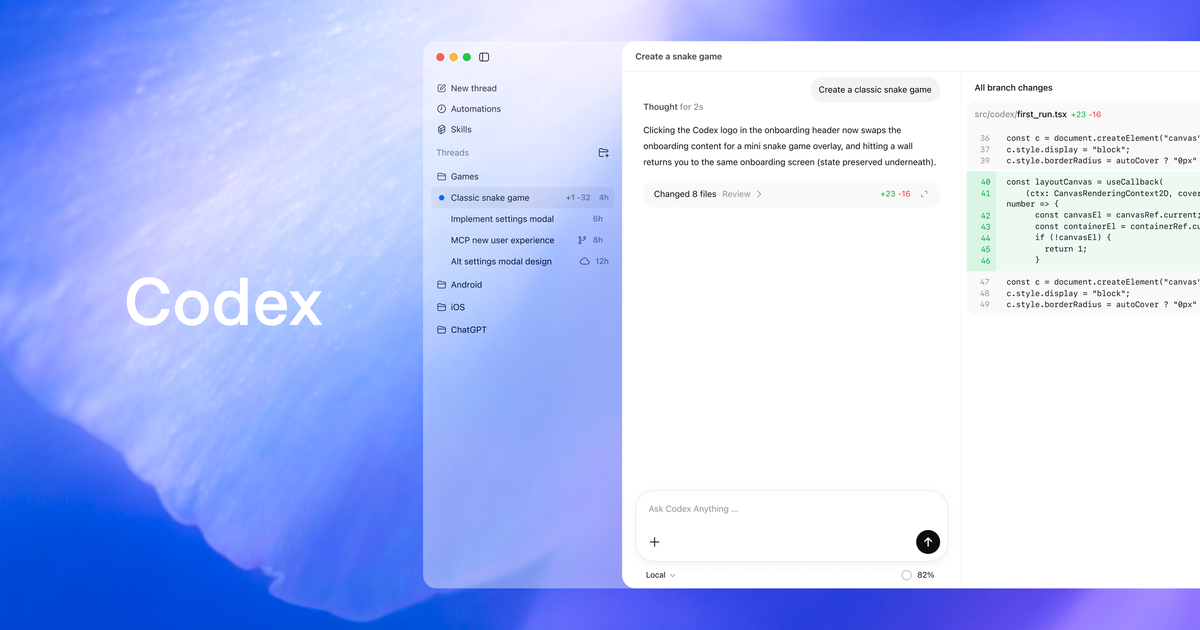

1. Codex CLI is quietly becoming an agent operating layer

OpenAI's Codex CLI changelog looks small until you read the details. Version 0.125.0 adds stronger app-server support, multi-agent relationships, rollout tracing, and session-level visibility.

That matters because OpenAI is not just shipping a CLI anymore. It is building the plumbing for agents that inspect files, run commands, write code, coordinate across steps, and explain what happened afterwards. The model is only one piece. The harness is the product.

"Rollout tracing now records tool, code-mode, session, and multi-agent relationships." — OpenAI Codex Changelog

2. Claude Opus 4.7 doubles down on long-horizon coding reliability

Anthropic launched Claude Opus 4.7, and the positioning is exactly what you would expect: better advanced software engineering, especially on the hardest tasks.

This is where Claude still has a real lane. Not just "write this component." More like: understand the repo, make the change, keep context, avoid breaking the wrong thing, and survive the boring middle of engineering. Long-horizon coding reliability is becoming Claude's moat.

"Opus 4.7 is a notable improvement on Opus 4.6 in advanced software engineering, with particular gains on the most difficult tasks." — Anthropic

3. Cursor 3 reframes the IDE around fleets of agents

Cursor 3 is a useful supporting signal for the same trend: AI coding is becoming agent management. The launch pulled 292 Product Hunt votes around a clear idea — one workspace for parallel local and cloud agents, MCPs, browser testing, and handoffs.

I am not treating it as the main story because of timing: Cursor 3 has been out for a few weeks. As context, though, it is perfect. GitHub, OpenAI, Google, Anthropic, and Cursor are all racing toward the same endpoint: the IDE as an agent control room.

"We’re introducing Cursor 3, a unified workspace for building software with agents." — Cursor

4. Google Antigravity is Google's answer to the agentic IDE race

Google introduced Antigravity as an agentic development platform, and the wording matters: platform, not editor.

That is the same direction as Cursor 3, Claude Desktop, and Codex. The winning tool will not simply autocomplete your code. It will coordinate the whole building process: files, terminal, browser, tests, agents, reviews, and context. Google is not chasing the old IDE category. It is chasing orchestration.

"Antigravity isn’t just an editor—it’s a development platform." — Google Developers Blog

5. Next.js MCP makes frameworks readable by agents

Next.js 16+ now includes MCP support so coding agents can access your app internals in real time.

This is one of those updates that sounds boring until you actually build with agents. The less your agent has to guess, the better it performs. Frameworks exposing live structure, routes, errors, logs, and app context means agents can move from "text prediction over your files" to software work with actual runtime awareness.

"Next.js 16+ includes MCP support that enables coding agents to access your application’s internals in real-time." — Next.js Docs

🧰 Worth your time

- Bench for Claude Code: Records Claude Code sessions so you can replay tool calls, file changes, reasoning traces, and subagent steps. If your agent run breaks something silently, this is the kind of audit trail you want.

- The New Waydev: Measures the full AI software development lifecycle from tool usage to production outcomes. The important shift: teams are moving from "who uses AI?" to "what actually shipped because of AI?"

- Claude Usage Tracker: Free, open-source macOS app for seeing Claude usage across tools like Claude Code, Cursor, Claude Desktop, and Cline. Very useful if your AI stack has quietly become five tools pretending to be one workflow.

- Google Workspace CLI: A CLI for Gmail, Drive, Calendar, Sheets, Docs and more, built for humans and agents. The killer angle is avoiding the MCP "context tax" by giving agents clean commands instead of dumping massive tool definitions into context.

My weekly message to YOU!

This week, do not just try another AI tool because everyone is shouting about it.

Pick one part of your workflow where AI feels messy: code review, debugging, testing, context, costs, or handoff. Then improve the system around it.

Take one action this week: make one agent run more observable, more repeatable, or easier to trust.

That is how this compounds. Not by collecting tools like Pokémon cards. By turning the chaos into a workflow you can run again tomorrow.

What is the messiest part of your AI coding workflow right now?

I read every single reply.

Talk soon, PAPAFAM,

Sonny 👋🏼

👇🏽 Don't forget to follow me across socials!
